Understanding Isolation Levels in Galera Cluster

In an earlier article we have gone through the process of Certification Based Replication in Galera Cluster for MySQL and MariaDB. To recall the overview, Galera Cluster is a multi-master cluster setup that uses the Galera Replication Plugin for synchronous and certification based database replication. This article examines the concept of Isolation Levels in Galera Cluster, that is an inherent feature of the MySQL InnoDB database engine.

Monitoring Your MySQL Database Using mysqladmin

As with any other dynamic running process, the MySQL database also needs sustained management and administration of which monitoring is an important part. Database monitoring allows you to find out mis-configurations, under or over resource allocation, inefficient schema design, security issues, poor performance that include slowness, connection aborts, process hanging, partial resultsets etc. It is giving you an opportunity to fine tune your platform server, database server, its configuration and other performance factors. This article provides you an insight into monitoring your MySQL database through tracking and analyzing important database metrics, variables and logs using mysqladmin.

Working of Certification based Replication in Galera Cluster

In the earlier articles, we have covered the basics of Galera Cluster for MySQL and MariaDB and another article about MariaDB Galera Cluster in particular. To recall the overview of Galera Cluster – It is a synchronous multi-master cluster that uses the InnoDB storage engine (XtraDB also for MariaDB). It is actually the Galera replication plugin that extends the wsrep API of the underlying DBMS, MySQL or MariaDB. Galera Cluster uses Certification based synchronous replication in the multi master server setup. In this article we will look into the technical aspects of Galera Cluster Certification based replication functionality.

Read more

Is Cloud Database Good for Your Business?

You have heard lot about Cloud now-a-days – cloud computing, cloud storage, cloud database, etc. but have you thought about how cloud technology can influence your business, the positive ones and negative sides? Also it is worth discussing how you can utilize cloud for the development of your business, overcoming any disadvantages associated with it. This article is trying to define what cloud database implies for your business and its advantages and disadvantages. Also it is trying to provoke discussions about the suitability of cloud database for you.

Choosing between AWS RDS MySQL and Self Managed MySQL EC2

The Amazon Web Service (AWS) products AWS EC2 and AWS RDS comes with sophisticated technology features and capabilities that serve the needs for Web based computing and storage efficiently. When coming to the decision making process for selecting between AWS RDS and the self managed AWS ECS or any independent MySQL DBaaS for your MySQL database needs, there are some prominent factors to consider. These factors are crucial and affect the operational and economic aspects of your database deployment. This article provides a comprehensive overview of both products to help you understand the distinction between them for making a suitable and cost-effective decision.

Read more

MariaDB Galera Cluster

We have covered the basics about Galera Cluster in a previous article – Galera Cluster for MySQL and MariaDB. This article further goes into the Galera technology and discusses topics like:

1. Who provides Galera Cluster?

2. What is MariaDB Galera Cluster?

3. An overview of MariaDB Galera Cluster Setup.

Read more

Securing MySQL NDB Cluster

As discussed in a previous article about MySQL NDB Cluster, it is a database cluster setup based on the shared-nothing clustering and database sharding architecture. It is built on the NDB or NDBCLUSTER storage engine for MySQL DBMS. Also the 2nd article about MySQL NDB Cluster Installation outlined installation of the cluster through setting up its nodes – SQL node, data nodes and management node. This article discusses how to secure your MySQL NDB Cluster through the below perspectives:

1. Network security 2. Privilege system 3. Standard security procedures for the NDB cluster.Detailed information is available at MySQL Development Documentation about NDB Cluster Security

Read more

Why Your Business Need Database Versioning?

How database versioning boosts your business growth?

Applications are neither ideal nor perfect. Along with the dynamic implementation environments and rapid technology changes, certain features can become obsolete and some new ones might be needed. Workflows and processes in the application need to be changed. Bugs and vulnerabilities can be reported any time. Competitors may release a new feature that demands you to reciprocate with matching or better feature. The chances are huge for you to initiate, perform and deliver a stable minor or major version. A delay or absence in this can negatively affect the reputation and/or monetary attributes like market performance, profit etc. In these circumstances, it is essential for you to make available for your teams, tools that automates and simplifies processes as much as possible. Applications or services have code and databases are integral parts of any product. A properly version controlled database boosts and simplifies development and production deployment processes. It also helps in critical analysis, comparison and cross review of your application sets. The results give you new insights and directions for a new promising version release and you can focus on your business growth, since everything is automated.

Read more

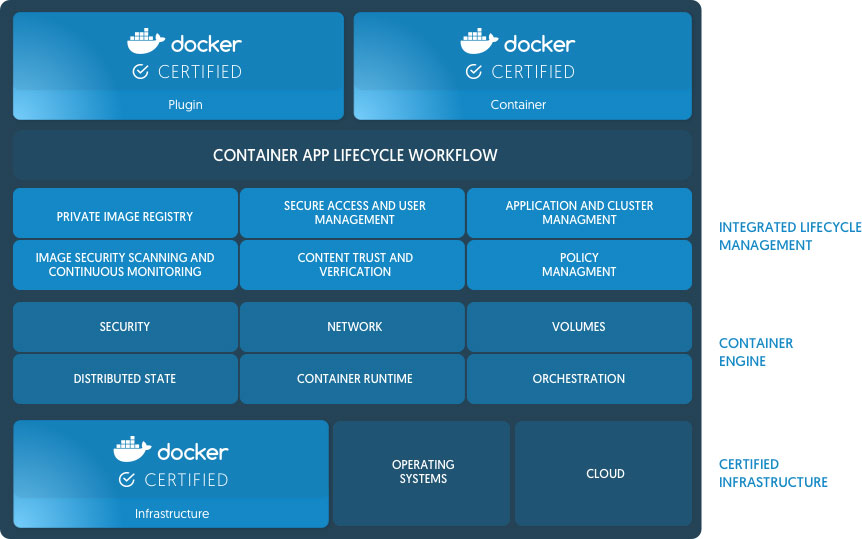

Docker Releases Enterprise Edition with Multi-Architecture and Multi-Tenant Support

On August 16, 2017 Docker announced the new release of Docker Enterprise Edition (EE). Docker EE is a Container-as-a-Service (CAAS) platform that provides a cross-platform, cross-architecture supply chain and deployment environment for software applications and microservices built to work in Windows, Linux and Cloud environments. The Docker container platform is integrated to the platform infrastructure to provide a native and optimized experience for the applications. Docker EE has the Docker tested and certified infrastructure, containers and plugins needed to run applications on Enterprise Platforms. The new Docker EE provides a container management platform that accommodates Windows, Linux and Mainframe apps and supports a broad range of infrastructure types.

How to Secure your MariaDB Database Server

At the time of writing this article, the current MariaDB 10.2 offers MariaDB TX Subscription to access the feature MariaDB MaxScale. MariaDB TX subscription offers high-end functionality like implementing business-critical production, test and QA deployments, management, monitoring and recovery tools, mission-critical patching and above all, access to the MariaDB Database Proxy, MaxScale.

Read more